The hard truth when you are trying to drive new business outcomes is that you won’t know if something is working until you see it in action. When you establish workflows and habits that prepare for an uncertain future, that future arrives a lot sooner than it does when you try to control it. Speed alone isn’t the goal. The goal is to create workflows that allow the team to embrace the process of experimentation.

Until it’s working, we’re all guessing. We don’t know how valuable a feature is until we test our hypothesis. When you enable a culture of continual learning and experimentation, you get a fast, frequent, high-quality flow of information throughout your value stream. But first, you’re going to have to think…smaller.

Get Smaller

Part of the value proposition for automating a CI/CD pipeline is to remove burdensome and manual work from creative people who should be experimenting with their creative ideas. With this in place we can get feedback on those ideas at a much faster pace.

With the CI/CD pipeline in place, product owners can—and should—be making the asks of the team smaller. Now that you can safely deploy as often as several times per day, giving teams user stories that will take two weeks to complete just means it’s that much longer before you get feedback.

As a product owner, you shouldn’t be waiting until the end of a sprint or a demo to give that feedback to the team. Smaller user stories that get into production quickly give you faster and better access to the critical data that informs what your next decision is going to be. With smaller batch sizes, you can validate the quality of the new feature, and get it into the CI/CD pipeline to move it into production as quickly as possible.

Small batch sizes provide two kinds of value. One, small batches go through systems faster. Two, they reduce variation. Just by virtue of being a smaller amount of work, there are literally fewer things that can go wrong. It’s much easier to debug a day’s worth of code than it is to debug a month’s worth of code.

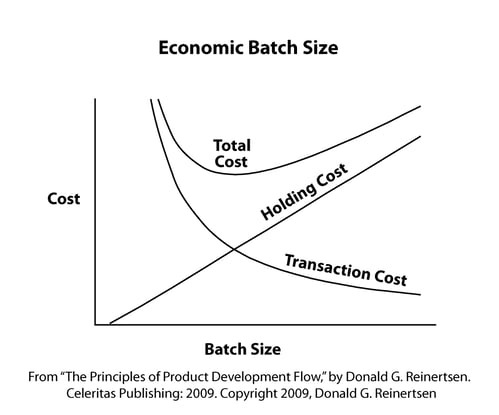

Optimal batch size is a function of holding cost and transaction cost. If it’s expensive to deploy changes, you have a high transaction cost, and with high transaction costs, small batch sizes aren’t economical.

Invest in a CI/CD pipeline automation strategy to lower those transaction costs and make it cheaper to have small batches. By reducing those fixed costs, you can predict what it’s going to cost to deploy every day vs. weekly, monthly, or (yes, this still happens) quarterly.
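
To make that trade-off concrete, here is a minimal sketch in Python. The cost model is an assumption for illustration, not something measured: each deployment has a fixed transaction cost that is spread across the batch, and each finished change accrues a holding cost while it waits for the rest of the batch. The function names and the numbers are hypothetical.

```python
def cost_per_change(batch_size: int, transaction_cost: float, holding_cost: float) -> float:
    """Average cost per change when `batch_size` changes ship together.

    transaction_cost: fixed cost of one deployment (assumed unit: hours)
    holding_cost: cost of one finished change waiting one period (assumed)
    """
    deploy_share = transaction_cost / batch_size   # fixed cost spread over the batch
    waiting = holding_cost * (batch_size - 1) / 2  # average wait while the batch fills
    return deploy_share + waiting


def optimal_batch_size(transaction_cost: float, holding_cost: float, max_size: int = 200) -> int:
    """Brute-force the batch size with the lowest average cost per change."""
    return min(
        range(1, max_size + 1),
        key=lambda n: cost_per_change(n, transaction_cost, holding_cost),
    )


# Hypothetical numbers: a 40-hour manual release vs. a 15-minute automated one.
manual = optimal_batch_size(transaction_cost=40.0, holding_cost=0.5)
automated = optimal_batch_size(transaction_cost=0.25, holding_cost=0.5)
print(f"manual pipeline: ship {manual} changes at a time")
print(f"automated pipeline: ship {automated} change(s) at a time")
```

Under these assumed numbers, the cheapest batch shrinks from 13 changes to a single change once the deployment cost drops. The exact figures matter less than the direction: lowering the transaction cost is what makes small batches the economical choice.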

Creating that infrastructure and shrinking down batches empowers your best thinkers to do what they should be doing all the time: Conducting experiments.

Become a Scientist

What if, instead of months-long deployments that turned into a messy, buggy backlog for the security team, you were running daily experiments? Daily experiments give you the immediate data you need to build the features your customers need. The longer those experiments take…the more you’re adding on to the overall delivery schedule, and the less likely you are to push the features your stakeholders need, want, and, eventually, use.

Let’s talk about hypothesis-driven development. Good innovation is driven by curiosity. Until you build it, until it’s live, until you get that critical data you need: everything is an experiment. Never assume that what you build will deliver the value you intended, and don’t let too much time pass between when you run your experiment and when you get your results.

Hypothesis-driven development should look like this:

- We believe that this capability

- Will result in this outcome

- We will know we have succeeded when we see a measurable signal
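
One way to keep a team honest about that template is to write each hypothesis down as data, with the measurable signal and its target agreed on before anything ships. The sketch below is one possible shape, not a standard: the `Hypothesis` class, its field names, and the example feature and numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    capability: str  # "We believe that this capability..."
    outcome: str     # "...will result in this outcome"
    signal: str      # name of the measurable signal
    baseline: float  # value of the signal before the change
    target: float    # value that counts as success

    def statement(self) -> str:
        """Render the hypothesis in the three-part form."""
        return (
            f"We believe that {self.capability} will result in {self.outcome}. "
            f"We will know we have succeeded when {self.signal} "
            f"moves from {self.baseline} to at least {self.target}."
        )

    def succeeded(self, observed: float) -> bool:
        """Compare the observed signal against the target set up front."""
        return observed >= self.target


# Hypothetical example: a small story with one measurable signal.
reorder = Hypothesis(
    capability="one-click reorder",
    outcome="more repeat purchases",
    signal="weekly repeat-purchase rate",
    baseline=0.12,
    target=0.15,
)
print(reorder.statement())
print("validated" if reorder.succeeded(observed=0.16) else "not validated")
```

Fixing the target before the experiment runs is the design choice that matters here: it keeps the result from being reinterpreted after the fact.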

Here’s an example, by way of a metaphor. A good friend of mine is a long-distance rifle shooter. He works with a spotter to give him instant feedback on how far off the target he is while he’s shooting. Makes sense, right? He works with real-time data to refine his skills while he’s practicing for competition.

Now, imagine trying to perfect your aim without instant feedback. Imagine him having to examine the target (which is yards away) after every shot just to get an idea if what he’s doing is working.

That’s how it can feel to us when we’re making business decisions blindly. We usually have some sense of how well what we’re doing is working, but no one should make business decisions in a vacuum.

Most modern product owners are familiar with the idea of the minimum viable product, or the MVP.

If you’re scoping an MVP that’s going to take a month or two to develop, that’s missing the mark of how an MVP should function. Instead of contextualizing an MVP as “the minimum amount of work we need for an entire product,” consider instead the minimum amount of effort you need to test and validate your hypothesis.

The bigger the MVP:

- The longer it takes to build

- The longer it takes to get any feedback

- The less value you get from the result

- The more variables you have to contend with

Remember that potato clock you built for your elementary school science fair? You had one variable you had to deal with. When you push a month’s worth of code into production, there are that many more variables at play.

This is going to take time and dedicated resources (both human and capital). But it’s an investment that you probably can’t avoid making if you want to remain competitive.

Make the Investment

Before we analyze the breakdown of resources you’ll need, let’s talk about what you’re already losing. There is a hard cost in forfeited revenue. The longer it takes to get that feedback, the more likely it is that the feature has already decayed in value. What was a good idea today may not be a good idea tomorrow, and the best way to capitalize on good ideas is to test them as soon as possible.

If you’ve already automated your CI/CD pipeline, now you can start experimenting with how you’re going to use it to get more value. If you haven’t, it’s time to make some investments.

What’s this going to take, and how can you contextualize those resources? Measure your return, then size the effort.

One: Calculate the ROI of Automation

Return = waste + lost opportunity

When you reduce the amount of time you spend on building new features that you might not need, you’re securing the ROI of that investment. Without it, how much cost is buried in waiting for deployments and how much revenue is lost in waiting for features to be shipped?
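
As a rough illustration of the return formula above, the sketch below puts assumed numbers on both terms. How you measure waste and lost opportunity is a choice: here waste is modeled as hours of manual deployment work, and lost opportunity as revenue forfeited while finished features wait to ship. Every parameter name and figure is hypothetical.

```python
def automation_return(
    manual_hours_per_deploy: float,
    deploys_per_year: int,
    hourly_rate: float,
    weeks_of_delay_removed: float,
    weekly_feature_revenue: float,
) -> float:
    """Return = waste + lost opportunity, over one year (assumed model)."""
    # Waste: paid time spent on manual deployment work.
    waste = manual_hours_per_deploy * deploys_per_year * hourly_rate
    # Lost opportunity: revenue forfeited while finished features wait to ship.
    lost_opportunity = weeks_of_delay_removed * weekly_feature_revenue
    return waste + lost_opportunity


# Hypothetical inputs: 6 manual hours per release, 24 releases a year, $80 an hour,
# and 8 weeks of cumulative shipping delay on features worth $2,500 a week.
annual_return = automation_return(6, 24, 80.0, 8, 2500.0)
print(f"Estimated annual return: ${annual_return:,.0f}")
```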

Organizations that don’t allocate resources to retool and improve are never going to realize the full potential of this process.

Two: Size the Effort

Cost = labor + lost opportunity

A good craftsperson always invests time in taking good care of their tools. You may have to allocate new or existing resources outside of your day-to-day operations, which can be a challenge for a lot of organizations that are already struggling to meet their current production obligations.

Invest the time you need to make this work. When you take that pause, and make that shift, then you are building a culture where you can make the best possible products. Take that time, and figure out the amount of human capital and actual capital you need to build this pipeline.
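
A back-of-the-envelope version of the cost formula, and the ROI it implies, might look like the sketch below. It assumes labor is engineer-weeks at a loaded weekly rate, and that lost opportunity is the value of the work those engineers defer while they build the pipeline. The ROI calculation is the usual (return − cost) / cost. All names and numbers are hypothetical, including the annual return, which is simply taken as a given input here.

```python
def automation_cost(
    engineer_weeks: float,
    weekly_rate: float,
    weekly_value_of_deferred_work: float,
) -> float:
    """Cost = labor + lost opportunity (assumed model)."""
    # Labor: people pulled off day-to-day delivery to build the pipeline.
    labor = engineer_weeks * weekly_rate
    # Lost opportunity: value of the work they are not doing in the meantime.
    lost_opportunity = engineer_weeks * weekly_value_of_deferred_work
    return labor + lost_opportunity


def roi(annual_return: float, cost: float) -> float:
    """First-year return on investment as a fraction of the cost."""
    return (annual_return - cost) / cost


# Hypothetical inputs: 4 engineer-weeks at $3,200 a week, deferring $1,000 a week
# of other work, against an assumed annual return of $30,000.
cost = automation_cost(4, 3200.0, 1000.0)
print(f"Estimated cost: ${cost:,.0f}")
print(f"First-year ROI: {roi(30000.0, cost):.0%}")
```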

The best way to start is to make these activities a part of your daily habits. Treat this whole process of writing shorter user stories, automating the testing, and shortening your entire feedback cycle as a part of your normal delivery schedule. When you wrap all of that into your definition of “done,” it becomes integrated into your everyday work.
